Evolution of GPT Models: GPT-1 to GPT-4 and Beyond

- pradnyanarkhede

- Mar 11, 2025


By Vedant Maldhure, Smera Nimje and Shibu Rai

Imagine a world where computers think, create, and converse like humans. Sounds like science fiction? Guess what? Generative Pre-trained Transformers (GPT) made it happen! OpenAI has been revolutionizing natural language processing (NLP) with its series of GPT models, pushing the boundaries of artificial intelligence. So, how did we get here? How did an unassuming AI evolve into one that can produce music, code, and even have smart conversations?

Let's take a walk through time and observe how these models evolved, from the humble beginnings of GPT-1 to the groundbreaking capabilities of GPT-4 and beyond.

The History and Development of GPT Models:

The development of GPT models is evidence of the rapid advancement in artificial intelligence and deep learning. The first Generative Pre-trained Transformer (GPT) was released by OpenAI in 2018. It used a deep learning architecture known as the Transformer to process text in a more contextual and effective manner than previous NLP models. Since then, GPT models have grown dramatically in size and capability to become some of the most capable AI language models ever developed.

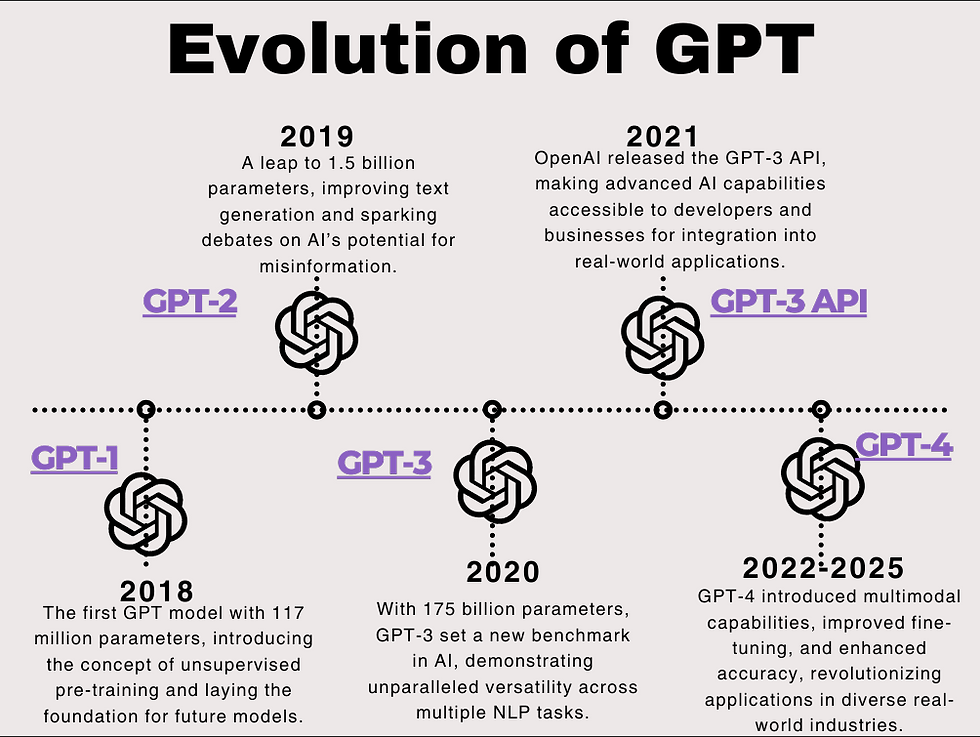

A Timeline of GPT Advancements:

GPT-1 (2018) - showed that a pre-trained transformer could outperform many earlier task-specific NLP models, paving the way for text generation with AI.

GPT-2 (2019) - significantly improved fluency and coherence, but its realistic, human-like text also raised concerns about AI misuse.

GPT-3 (2020) - took AI capabilities to new heights with 175 billion parameters, demonstrating technical and creative abilities that reshaped content creation, coding, and customer service.

GPT-4 (2023) - arrived with improved reasoning, multimodal capabilities, and factual accuracy, making AI interactions feel more natural and seamless.

GPT-5 and beyond (Future) - are still being researched, with the aim of more human-like interaction, real-time learning, and, more speculatively, AI consciousness.

Each step has introduced more smarts, context, and usability, so AI is now an integral part of today's digital interactions.

GPT-1: The Dawn of a Revolution (2018)

GPT-1 was OpenAI's first step toward its lofty aim of developing an AI capable of producing human-like text. It had 117 million parameters and learned from a large corpus of books. Despite its limited capacity, GPT-1 established that unsupervised learning could be used to train models that produce readable text.

Before GPT-1, most NLP systems relied heavily on supervised learning, which requires labeled data for each task. GPT-1 changed this by first pre-training a transformer-based model to predict the next word of a sentence from large amounts of unlabeled text, and then fine-tuning it on specific tasks. This was a breakthrough in language modelling and set the stage for more sophisticated AI systems.
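To make "predict the next word from raw text" concrete, here is a deliberately tiny sketch. It is not a transformer and not how GPT-1 was built: it is a simple word-pair counter, and the function names and the toy corpus are made up for illustration. What it shares with GPT-style training is the key idea that the text itself supplies the answers, so no human labels are needed.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count which word follows which in raw, unlabeled text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    # Each adjacent pair (current word, next word) is a free training example.
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Toy corpus, invented for this example.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept ."
model = train_bigram(corpus)
print(predict_next(model, "the"))  # prints the word seen most often after "the"
```

A real GPT model replaces the counting table with a neural network that looks at the whole preceding context, but the training signal is the same: guess the next word, then check it against the text.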

Key Highlights:

First unveiled in 2018 by OpenAI

Had 117 million parameters (tiny by modern standards, but groundbreaking at the time)

Trained on a large corpus of books to learn general language understanding

Demonstrated the promise of unsupervised learning, in which AI learns patterns directly from raw text without human-labeled examples

Could already produce decent, though still awkwardly phrased, writing

While GPT-1 demonstrated that transformers could process and generate text far better than prior models, it lacked deep contextual comprehension and tended to produce responses that ignored the wider context. These shortcomings paved the way for its successor, GPT-2.

GPT-2: The Model That Almost Didn't Get to See the Light of Day (2019)

OpenAI took AI text generation a big step forward with GPT-2. The model was trained on a much bigger dataset and had 1.5 billion parameters, which made the generated text far more natural and flowing. Its potential, however, raised ethical concerns, and OpenAI was at first reluctant to release the complete model to the public because of the risk of abuse.

GPT-2 represented a significant advance over GPT-1 in its ability to generate natural, coherent text. It did well at storytelling, content generation, and even elementary problem-solving. However, its capacity to generate realistic-seeming fake news and propaganda led to concerns around responsible AI design and deployment.

What Made GPT-2 Special?

1.5 billion parameters – more than 10 times larger than GPT-1, making its text generation much more natural

Trained on WebText, a corpus of millions of web pages, so it could mimic numerous writing styles

Able to create entire articles, poems, and even short stories with very little input

Showed strong zero-shot learning ability, i.e., it could perform tasks without being explicitly trained on them

Despite these concerns, OpenAI later released the full GPT-2 model publicly, which spurred further research into AI-generated text. However, GPT-2 still struggled with factual accuracy and with staying coherent over longer passages. This is where GPT-3 came in.

GPT-3: The Game-Changer (2020)

GPT-3 was a game-changing model that brought a new level of language fluency and contextual understanding. With 175 billion parameters, GPT-3 could generate text that was often hard to distinguish from human writing. It found extensive use in applications ranging from chatbots to content generation and programming assistance.

GPT-3 changed the game for AI interaction because it could assist with real-world tasks such as writing an essay, fixing bugs in code, and even writing poetry. It also powered AI-driven customer support engines and learning tools.

Mind-Boggling Capabilities:

175 billion parameters – a new record, more than 100x larger than GPT-2!

Could assist with complex tasks such as coding, writing poetry, composing music, and even drafting medical recommendations

Powered AI chatbots, content creation tools, and much more

Made available to developers through an API, leading to a proliferation of AI apps across sectors
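For a sense of scale, the jump between generations can be worked out directly from the parameter counts quoted in this post. The snippet below is just that arithmetic; the dictionary and function names are ours, chosen for illustration.

```python
# Parameter counts as quoted in this post.
PARAMS = {
    "GPT-1": 117e6,   # 117 million
    "GPT-2": 1.5e9,   # 1.5 billion
    "GPT-3": 175e9,   # 175 billion
}


def growth_factor(older, newer):
    """How many times more parameters `newer` has than `older`."""
    return PARAMS[newer] / PARAMS[older]


print(round(growth_factor("GPT-1", "GPT-2"), 1))  # 12.8
print(round(growth_factor("GPT-2", "GPT-3"), 1))  # 116.7
```

So "10 times" and "100x" are rounded-down figures: the actual jumps were closer to 13x and 117x.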

GPT-3 also displayed few-shot learning: it could produce correct output after seeing only a handful of examples in the prompt. It still had issues with factual accuracy and needed better context sensitivity, which set the scene for GPT-4.
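Few-shot learning is easier to picture with an actual prompt. The sketch below only builds the text that would be sent to a model; it does not call any real API. The "Input:/Output:" layout and the function name are assumptions made for illustration, not an official format.

```python
def build_few_shot_prompt(examples, query, instruction=""):
    """Assemble a prompt from worked examples followed by a new, unanswered query."""
    lines = []
    if instruction:
        lines.append(instruction)
    # Each (input, output) pair shows the model the pattern to imitate.
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
    # The final query is left open so the model completes it.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical sentiment task with two worked examples.
examples = [
    ("I loved this movie!", "positive"),
    ("The food was terrible.", "negative"),
]
prompt = build_few_shot_prompt(
    examples,
    "What a wonderful day.",
    instruction="Label the sentiment of each input.",
)
print(prompt)
```

Passing an empty list of examples turns the same function into a zero-shot prompt, where the model gets only the instruction and the query.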

GPT-4: The Smartest AI Till Now (2023)

GPT-4 introduced multimodal support, i.e., it could now process both text and images. This was a giant leap forward, as earlier GPT models could only process text.

GPT-4 set the bar higher by improving its handling of humor, sarcasm, and even emotional undertones in language. Firms started integrating GPT-4 into virtual assistants, research tools, and creative writing platforms.

What Makes GPT-4 Unique?

Multimodal abilities – Unlike previous models, GPT-4 was trained to understand text and images, allowing it to read charts, diagrams, and pictures

Better contextual understanding, allowing for more logical and correct answers

Improved factual accuracy, reducing AI hallucinations and making it more credible

Better imagination and reasoning, making it an ideal tool for writers, coders, and scholars

GPT-4 showed a much-improved grasp of nuance, humor, and emotional tone, making it a better AI companion. But research on AI does not end with GPT-4. Future generations hold much promise.

From the humble text predictions of GPT-1 to the multimodal brilliance of GPT-4, the path of GPT models has been nothing less than remarkable. With AI advancing every day, it's our turn to use it responsibly and leverage its power for the greater good.

What do you think about the future of AI? Will it be a tool for good, or could it become too powerful? Drop your thoughts in the comments!

If you found this blog insightful, hit that like button and share it with your friends!
