Transfer Learning in AI: Making Models Smarter with Less Data (Because Who Has Time for All That Data?)

- pradnyanarkhede

- Mar 12, 2025

- 5 min read

Updated: Mar 12, 2025

Introduction: The Struggle is Real

Okay, let's set the scene. You're a machine learning engineer working on an interesting project, and you're about to train your shiny new model. But then you realize something: you don't have enough data. Sound familiar? It's like trying to bake a cake with no eggs: it's just not going to work.

You could go out and compile a large dataset (and spend the next six months labeling images of dogs, cats, and maybe an occasional hamster), but what if I told you there was a way to skip most of that hard work? Enter transfer learning: the machine learning superhero that saves time and effort and gives you smarter models that depend on far less data. 🦸‍♂️💡

What is Transfer Learning?

Okay, let’s break it down. Imagine you’re a person who’s already expertly fluent in three languages, and someone asks you to learn a fourth one. Easy, right? You don’t have to start from scratch. You can just apply all that knowledge from your first three languages and learn the new one much faster.

That's what transfer learning does in machine learning. We take a pre-trained model, one that has already learned from a sizable dataset, and fine-tune it for a new task using a smaller dataset. It's like starting your machine learning journey with a head start instead of having to reinvent the wheel.

As an illustration: your model has been trained on millions of pictures of dogs, cats, and pizza (yes, pizza qualifies as an object). Now you want that model to identify images of sushi. With transfer learning, you don't need to collect thousands of sushi photos. You simply fine-tune your model with a few hundred sushi images, and presto: your model is suddenly a sushi guru.

Why is Transfer Learning the Cool Kid in Town?

Imagine you're a college student, and instead of reading all 100 pages of every textbook (like a normal person), you borrow notes from someone who already studied the material. That’s the magic of transfer learning — you’re borrowing all the “smart” stuff the model learned from its previous tasks to make learning a new one a whole lot quicker and easier.

Here are a few reasons why transfer learning is the coolest thing in machine learning:

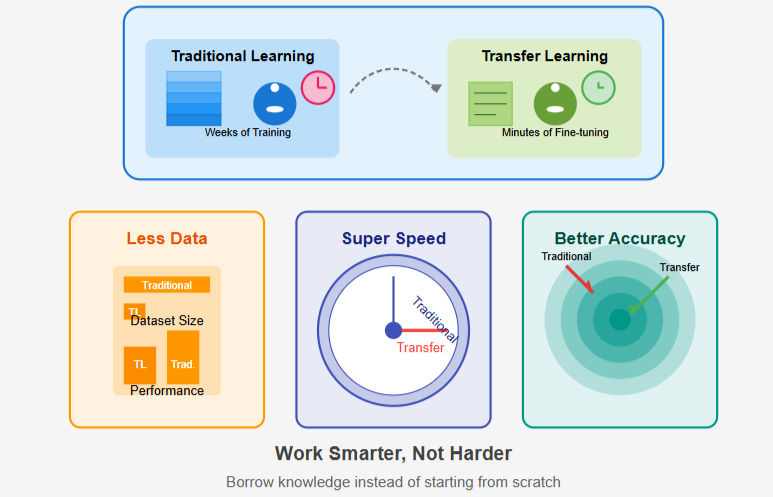

Less Data? No Problem!: Models trained from scratch usually need tons of labeled data. With transfer learning, you can build powerful models with far fewer labeled examples.

Super Speedy Training: Forget spending weeks training a model from scratch. Thanks to transfer learning, you can fine-tune a model in hours or even minutes, depending on your task.

Better Accuracy with Less Work: Pre-trained models come with a bunch of pre-learned features that make your model smarter right out of the box. So, with a little fine-tuning, you can get excellent accuracy with minimal effort. Who doesn't love efficiency?
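To put a rough number on the "speedy training" point, here is a small Python sketch that counts how many parameters actually get updated when only the last layer is fine-tuned. The layer sizes are made up for illustration (they don't come from any real model), so treat the result as intuition rather than a benchmark.

```python
# Hypothetical fully connected network: (inputs, outputs) for each layer.
# These sizes are illustrative assumptions, not taken from a real model.
layers = [(1024, 512), (512, 256), (256, 128), (128, 10)]


def param_count(n_in, n_out):
    """Number of weights plus one bias per output unit."""
    return n_in * n_out + n_out


total = sum(param_count(n_in, n_out) for n_in, n_out in layers)
trainable = param_count(*layers[-1])  # only the final layer is fine-tuned
frozen = total - trainable            # everything else keeps its old weights

print(f"total={total}, trainable={trainable}, frozen={frozen}")
print(f"share of parameters being trained: {trainable / total:.2%}")
```

In this toy setup only a tiny fraction of the parameters is updated, which is one reason fine-tuning tends to be much cheaper than training everything from scratch.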

How Does Transfer Learning Actually Work?

Alright, let’s talk shop. Here’s a simple breakdown of how transfer learning works in practice. No need to get your brain in a twist; I promise it’s not that complicated!

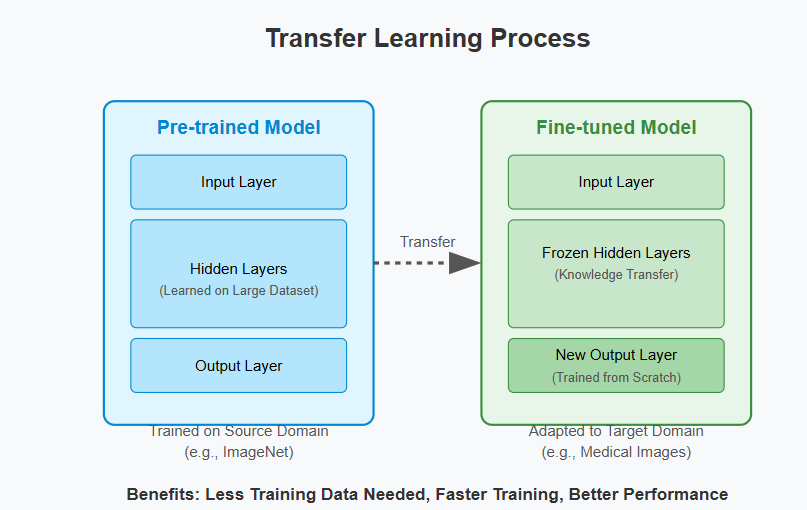

Begin with a Pre-trained Model: Think of a student who has already learned the fundamentals. You begin with a model that has already been trained on a large dataset, such as an image classifier trained on ImageNet (which contains millions of images of common objects) or a language model like BERT (which has already been pre-trained on a large body of text).

Freeze the Early Layers: Your model's early layers hold general knowledge (consider them the "learning how to read and write" stage). You freeze those layers, that is, you leave their weights unchanged during training, since they've already learned helpful things such as edges, colors, and patterns.

Fine-Tune the Final Layers: Now, the best part. You fine-tune the final layers of the model to suit your particular task (such as identifying sushi, or forecasting what cat meme will go viral next).

Train with Your Small Dataset: You don't need a big dataset! A few hundred labeled images of sushi can be enough, and your model can begin classifying after only a little more training.

Process of Transfer Learning
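The four steps above can be sketched in plain Python. This is a toy sketch under loud assumptions: `FROZEN_WEIGHTS` is a hand-written stand-in for a real pre-trained backbone (nothing here was actually pre-trained), the "new task" is a made-up two-number classification problem instead of sushi photos, and all function names are hypothetical. What it does show faithfully is the pattern: the early layer stays frozen and only the new final layer is trained on a small dataset.

```python
import math

# Step 1: stand-in for a pre-trained backbone. In a real project these weights
# would be loaded from a model trained on a large dataset; here they are
# made-up numbers used purely for illustration.
FROZEN_WEIGHTS = [[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]]


def extract_features(x):
    """Step 2: frozen early layer (linear map + ReLU). Its weights never change."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, epochs=200, lr=0.5):
    """Steps 3 and 4: train only the new final layer on the small dataset."""
    head_w = [0.0] * len(FROZEN_WEIGHTS)
    head_b = 0.0
    for _ in range(epochs):
        for x, y in data:
            feats = extract_features(x)
            pred = sigmoid(sum(w * f for w, f in zip(head_w, feats)) + head_b)
            error = pred - y  # gradient of the log loss with respect to the logit
            head_w = [w - lr * error * f for w, f in zip(head_w, feats)]
            head_b -= lr * error
    return head_w, head_b


def predict(head, x):
    """Return True when the trained head assigns the example to class 1."""
    head_w, head_b = head
    feats = extract_features(x)
    return sigmoid(sum(w * f for w, f in zip(head_w, feats)) + head_b) >= 0.5


# Tiny labeled dataset for the new task: the label is 1 when x[0] > x[1].
small_dataset = [([2.0, 0.0], 1), ([1.5, 0.5], 1), ([3.0, 1.0], 1),
                 ([0.0, 2.0], 0), ([0.5, 1.5], 0), ([1.0, 3.0], 0)]

frozen_before = [row[:] for row in FROZEN_WEIGHTS]  # copy, to check freezing
head = train_head(small_dataset)

print("backbone unchanged:", FROZEN_WEIGHTS == frozen_before)
print("predictions:", [predict(head, x) for x, _ in small_dataset])
```

In a real project you would typically load actual pre-trained weights through a deep learning framework instead of hard-coding them. The idea of keeping the backbone fixed and training only the new head stays the same.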

Real-World Applications: Because AI Isn’t Just for Robots

Transfer learning is more than just a cool skill to brag about to your friends (well, maybe it is that too). Businesses and organizations use it in the real world to tackle important problems on limited data and budgets. Let's look at a few examples:

Imaging in Medicine: Thanks to transfer learning, a pre-trained model can be fine-tuned to identify illnesses like pneumonia or cancer from a relatively small set of labeled scans, saving doctors the time it would otherwise take to annotate millions of medical images. It's similar to having a really smart doctor who is already familiar with the basics of human anatomy!

Self-Driving Cars: Self-driving cars must be able to recognize many kinds of objects, such as potholes, pedestrians, and stop signs. Rather than training their models from scratch, businesses use transfer learning to adapt models pre-trained on generic images to the peculiarities of driving. It's like asking a seasoned photographer to start watching the road.

NLP, or natural language processing: Have you ever wondered how, despite your strange accent, your voice assistant (such as Siri or Alexa) seems to understand what you're saying? Part of the answer is transfer learning: pre-trained speech and language models (GPT-3 is a well-known example of a pre-trained language model) can be adapted to understand and answer questions with far less task-specific data than training from scratch would require.

Challenges: Don’t Get Too Comfortable

Even though everyone loves the concept of "less work, more efficiency," transfer learning isn't a magic bullet. You still need to watch out for the following:

Domain Shift: A model may become confused if the source task is too dissimilar from the target task (for example, taking a model trained on dog photos and fine-tuning it for underwater basket weaving). Stick to source and target tasks that are reasonably related.

Overfitting: If you fine-tune too aggressively on a small dataset, the model may memorize the data rather than generalize. Be sure to hold out a validation set and use regularization.
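One common way to act on the overfitting advice is early stopping: watch the loss on a held-out validation set and keep the weights from the epoch where it was lowest. Early stopping is not named above, so treat it as one illustrative option. The function name, the `patience` parameter, and the loss values in this Python sketch are all made up for illustration.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the epoch with the best validation loss,
    stopping once the loss has failed to improve `patience` times in a row."""
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                break  # validation loss stopped improving: likely overfitting
    return best_epoch


# Made-up validation losses: they improve at first, then rise as the model
# starts memorizing the small training set.
val_losses = [0.90, 0.62, 0.48, 0.45, 0.47, 0.52, 0.60]
best = early_stopping_epoch(val_losses)
print(f"keep the weights from epoch index {best}")
```

The training loss would usually keep falling past that point, which is exactly why the validation set, and not the training set, decides when to stop.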

Conclusion: Transfer Learning, AKA “The Shortcut to Smart Models”

In summary, transfer learning is a game-changer in the field of machine learning. Making models smarter with less data is like using your friend's notes to earn an A on your exam (without getting caught). 📝

So don't freak out the next time you're working on a project with a small dataset. Just remember that transfer learning is your new best buddy. You can start building strong, intelligent models right now and skip the drawn-out process of collecting endless amounts of data.

Have you experimented with transfer learning? What is the most absurd thing you have trained a model to do? Share your experience in the comments below! We'd love to hear about it. 😎

Blog by:

Pratik Gaikwad(124B2B028)

Krishna Shinde (124B2B035)

Mayank Udapurkar (124B2B036)
